Twenty-plus years ago, I got my first job as an actual, card-carrying linguist, working for a company that did things with big collections of linguistic data, using them to improve computer programs that did speech recognition, i.e. figuring out what words a person is saying.

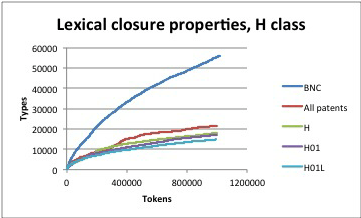

One fine day the people that gave us the vast majority of our income sent their big-collection-of-linguistic-data specialist to visit us. We demonstrated to him the computer program that we had built to answer the question: how can you tell when a big collection of linguistic data is big enough? We pointed out how to spot the tell-tale sign on a graph that means “it’s big enough.” His response: “Oh, that just means that linguistic data is bursty.”

What did he mean by “bursty?” We had a guess, but weren’t exactly sure, and given that his company paid us a lot of money and he was their expert, my boss thought it best not to push back. A few months later, they declined to renew our contract, and our owner laid everyone off and went away to do something else. Was it because we didn’t push back on the big-collection-of-linguistic-data expert’s dismissiveness? Probably not–our little company committed far bigger errors, and on a sadly regular basis. Whatever–the job market for computational linguists was not terrible in those days (it’s pretty wonderful now), and I found my second job as an actual, card-carrying linguist pretty quickly. But: burstiness is pretty important, and it continues to bump into my life today, in various and sundry ways, some of which will be of interest to readers of this blog.

What burstiness means: per Wikipedia,

In statistics, burstiness is the intermittent increases and decreases in activity or frequency of an event.

Wikipedia, Burstiness

In plain English: burstiness is present when something doesn’t happen for long periods of time, but then happens a lot, and then goes back to not happening very often. Some things that have this characteristic: hurricanes, and pandemics. Statisticians care about burstiness because bursty things are difficult to characterize with normal statistics, so you have to come up with new techniques to work with them; people like disaster planners and public health experts care about those statistics because it is difficult to predict, and therefore to plan for, things that have weird statistical properties.

From a computational linguist’s perspective, burstiness is important because in big collections of language, you don’t see new words very often, but when you do, sometimes you see a lot of them at once. If you’re trying to do something like build a dictionary for a computer program, you typically do that by finding all of the words in a big collection of linguistic data. But how do you know when your collection of linguistic data is big enough? See above; the problem is that if you keep growing the collection, you know that there will be bursts of new words, but you can’t keep growing your collection forever–at some point, you have to stop and work with what you have at hand.
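To make the "is it big enough?" question concrete, here is a minimal sketch of the kind of count that sits behind a vocabulary growth graph. It is not the program from my old job; the function name `vocabulary_growth`, the chunk size, and the toy corpus are all invented for illustration.

```python
def vocabulary_growth(tokens, chunk_size):
    """Count the never-before-seen words in each successive chunk of text."""
    seen = set()
    new_per_chunk = []
    for start in range(0, len(tokens), chunk_size):
        chunk = tokens[start:start + chunk_size]
        new_words = {word for word in chunk if word not in seen}
        new_per_chunk.append(len(new_words))
        seen.update(new_words)
    return new_per_chunk


# Toy corpus (made up): familiar words for a while, then a sudden
# cluster of military vocabulary, then familiar words again.
tokens = (
    "the cat sat on the mat the cat sat on the mat the cat sat on the mat "
    "the spahi wore a calot in the automitrailleuse "
    "the cat sat on the mat"
).split()

print(vocabulary_growth(tokens, chunk_size=6))  # [5, 0, 0, 5, 1, 0]
```

The two zeros in the middle are the "flat" part of the graph that tempts you to stop collecting; the 5 that follows is the burst you would have missed.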

Many of our dear fellow readers are engaged in learning a language that they don’t already speak. I am one of them–if you have been reading this blog for a few years, you have followed my feeble attempts to learn la langue de Molière, also known as “French.” By now I know the language well enough that I can pick up a book in it and not have to turn to a dictionary very often. But, when I do, it typically happens like this…

Right at this moment, I’m reading Paris brûle-t-il ?, “Is Paris burning?,” the work of reference on the liberation of Paris. I typically get through about three pages before I have to look up a word. But, then this morning, I’m reading about the French 2nd Armored Division rolling from Normandy to Paris when I come across this sentence. I had to look up all of the words in bold face:

Glissant en silence sur leurs six roues de caoutchouc, les **automitrailleuses** des **spahis** à **calots** rouges, “chiens de chasse” de la division, ouvraient la marche.

Dominique Lapierre and Larry Collins, Paris brûle-t-il ?, published by Robert Laffont in 1964.

- l’automitrailleuse: a light armored vehicle.

- le spahi: native cavalry trooper of the Maghreb.

- le calot: garrison cap in English; when I was in the Navy, we called them “cunt caps.” A calot has no brim or visor, and therefore can be folded flat and tucked under the epaulet of a military jacket.

After that, it was back to my normal rate: about one word every three pages. That certainly counts as “not very often,” and is pretty good for a non-native speaker. To then jump to three words in a single sentence, and then go back to my base rate of one word every three pages, is a good example of burstiness. Once again, we see why one might write a blog like this one–a blog about the statistical properties of language and their implications for people who are trying to learn one. What happened to the dismissive big-collections-of-linguistic-data expert? I don’t know for a fact, but I do know that people who are dismissive of the opinions of others don’t typically have much professional success. Personally, I took what I learned from the experience of working at a failed software start-up to do a better job of being a computational linguist, and have had a wonderfully fun time with it. Want to try a career in computational linguistics yourself? Start here if you are not a graduate student, or here if you are, and I hope you have as much fun with it as I have!

French notes:

Despite what its name would lead one to think, an automitrailleuse does not necessarily carry a machine gun. Here are some pictures of modern automitrailleuses. You’ll notice that some of them look a lot like tanks. The salient differences are that (1) they weigh less, and (2) they have wheels, not treads.

In the graph, there are two blue lines and neither is really “flat,” although I can see the bursts. What prevents more bursts? (Also check “right” vs “write” — I’m guessing you dictated part of this and the language model sucks because you dictated to Siri.)

YOU’RE asking ME what prevents more bursts?? (“K in Colorado” is an expert in this kind of statistical modeling of language.) Of course, NOTHING prevents more bursts, which is why relying on the graph flattening out is risky, and you need more sophisticated models if it’s really important to you to anticipate them. When you’re building statistical language models, though, you usually have bigger problems to worry about–you’re more likely to be limited by how much storage space you have than by missing a burst, for example.

Buy more memory or storage! 😉

I suppose ultimately there is no way to avoid this problem. A new burst is always around the corner, and you can’t detect it in the data. But there are ways to detect it in operation. Unfortunately, I rarely see any such effort. Two approaches that come to mind: (1) Model being out-of-model. Build a language model incrementally such that burstiness is encountered, separately detected, and recognized in model parameters. (2) Measure and model confidence. Back in my day, we had both acoustic and language models and likelihood was a weighted linear mixture of the two. One measure of confidence was to introduce jitter, observing how predictions changed when changing the weights. These days, models are bigger black boxes, but there are ways of introducing jitter. Where jitter caused volatile predictions, confidence was low.
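A rough sketch of the weight-jitter idea in approach (2), under stated assumptions: each hypothesis carries an acoustic log-score and a language-model log-score, the combined score is `acoustic + lm_weight * lm`, and every number below is invented. The names `best_hypothesis` and `jitter_confidence` are hypothetical and do not come from any real recognizer.

```python
def best_hypothesis(hypotheses, lm_weight):
    """Return the text of the hypothesis with the highest combined score."""
    return max(
        hypotheses,
        key=lambda h: h["acoustic"] + lm_weight * h["lm"],
    )["text"]


def jitter_confidence(hypotheses, lm_weight, deltas=(-0.2, -0.1, 0.1, 0.2)):
    """Nudge the language-model weight and report how often the winner survives.

    Returns (baseline winner, fraction of jittered weights that agree with it).
    """
    baseline = best_hypothesis(hypotheses, lm_weight)
    agree = sum(
        best_hypothesis(hypotheses, lm_weight + delta) == baseline
        for delta in deltas
    )
    return baseline, agree / len(deltas)


# Invented log-scores: one comfortable win, one near tie.
stable = [
    {"text": "recognize speech", "acoustic": -10.0, "lm": -2.0},
    {"text": "wreck a nice beach", "acoustic": -12.0, "lm": -6.0},
]
volatile = [
    {"text": "I am corn", "acoustic": -10.0, "lm": -5.0},
    {"text": "Einkorn", "acoustic": -9.0, "lm": -6.1},
]

print(jitter_confidence(stable, lm_weight=1.0))    # ('recognize speech', 1.0)
print(jitter_confidence(volatile, lm_weight=1.0))  # ('I am corn', 0.5)
```

In the second case the winner flips for half of the jittered weights, which is the kind of volatility that would have marked the segment as low confidence.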

Modern dictation (e.g., on one’s iPhone) often flags uncertain segments of resulting text. However, all too often iPhone dictation blows right past missing vocabulary, never flagging even the least likely transcribed speech. E.g., “Einkorn” (an heirloom wheat) is transcribed as “I am corn” despite encountering “Einkorn” earlier in the dialog and despite the unlikeliness of “I am corn.” Suppose iOS actually recognizes its vocabulary deficiency (perhaps by iOS version 29?). Is there any way to grow the vocabulary?

There was a time when its speller could learn new words, especially proper nouns and other names. Not any longer. Even names in my address book! Even though I exclusively used “Nicky” as the spelling of my cat’s name, iOS insisted on transcribing it as “Nikki” despite hundreds of manual corrections. Spelling out “Candice” gets the following suggested completions: “Can, Check”, then “Can, Call”, then “Can’t and Canning”, then “Candle” and “Candles” — be still my heart! — and finally with only two more characters to go, “Candidates” and — behold! — “Candice.”

Seems as if we are regressing. iOS 15 is reportedly out today. Probably some new emojis. Have a good day.

Jitter is a good idea. I think of it as a form of metamorphic testing, a technique for cases where a program or process is so opaque that you can’t write normal tests for it—given some input X, expect *specific* output Y. The idea behind metamorphic testing is that you make some change to the input such that you know in *general* what *kind* of change you should see. For example, suppose that you have a program that calculates the mean of a bunch of data points. You give it dataset A and it returns a mean of M. Then you multiply the values in A by 2, and feed THAT to the program. Your new mean should be double the first one.
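Here is a minimal sketch of that mean-doubling check. The helper name `check_scaling_relation` and the deliberately broken `buggy_mean` are made up for illustration; `statistics.fmean` is the real standard-library function standing in for "the program."

```python
import math
from statistics import fmean


def check_scaling_relation(mean_fn, data, factor=2.0):
    """Metamorphic relation: scaling every input by `factor` should scale the mean by `factor`."""
    original = mean_fn(data)
    scaled = mean_fn([x * factor for x in data])
    return math.isclose(scaled, factor * original, rel_tol=1e-9, abs_tol=1e-9)


def buggy_mean(values):
    """Deliberately wrong: adds a constant offset to the true mean."""
    return sum(values) / len(values) + 1


data = [3.0, 1.5, -4.0, 10.25]

print(check_scaling_relation(fmean, data))       # True
print(check_scaling_relation(buggy_mean, data))  # False
```

Notice that the check never needs to know what the correct mean of `data` is. It also will not catch every bug: a mean that divides by the wrong count would still double when the inputs double, so in practice you want several different relations.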

I learned a lot from this blog post! Thanks!

I guess I can’t leave a reply to a reply to a reply. So, more on jitter…

Lin Chase experimented with it for Wayne Ward using the old Sphinx speech recognizer — a great tool for experimentation. I did some additional work when at Berdy and working with Wayne, presenting it at Eurospeech several ages ago.

Instead of input jitter, Lin used parameter jitter. In Sphinx that was terribly easy because there was one parameter (language model weight) that was terribly easy to fiddle with. In today’s speech recognition, the black box ain’t so easy to twiddle. But not impossible. I can imagine, for example, adding a tiny bit of noise to network weights, possibly at different levels in the hierarchy, substituting the twiddled network outputs for the standard outputs, and seeing what changes. I’m sure someone is already doing that.
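A toy sketch of what that might look like, with loud caveats: this is a hypothetical two-class linear scorer in plain Python, not a speech recognizer and not anyone's published method. The names `predict` and `weight_jitter_stability`, the noise level, and the inputs are all assumptions made for illustration.

```python
import random


def predict(weights, features):
    """Return the index of the highest-scoring class for a linear model."""
    scores = [sum(w * x for w, x in zip(row, features)) for row in weights]
    return scores.index(max(scores))


def weight_jitter_stability(weights, features, noise=0.05, trials=200, seed=0):
    """Fraction of noisy copies of the weights that keep the original prediction."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    baseline = predict(weights, features)
    unchanged = 0
    for _ in range(trials):
        noisy = [[w + rng.gauss(0.0, noise) for w in row] for row in weights]
        unchanged += predict(noisy, features) == baseline
    return unchanged / trials


weights = [[1.0, 0.0], [0.0, 1.0]]  # invented "network": one row per class

clear_input = [1.0, 0.1]        # class 0 wins by a wide margin
borderline_input = [1.0, 0.98]  # class 0 wins, but only barely

print(weight_jitter_stability(weights, clear_input))
print(weight_jitter_stability(weights, borderline_input))
```

The clear input should keep its prediction under essentially every perturbation, while the borderline one flips a good share of the time. That gap is the confidence signal; in a real network the same idea would mean perturbing weights at one or more layers and comparing outputs.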
