Watch a movie like Arrival and you’ll get the impression that linguists spend their professional lives sitting around speculating about Sanskrit etymologies and the nature of the relationship between language and reality. I’m not saying that we never do such things, but, no: that’s not what we do with our typical workdays. I’m a computational linguist, which among other things means that what I do involves computers, which among other things means that I spend a certain amount of my time sitting around writing computer programs that do things with language. Often, those programs are doing things that do not look very…exciting. Not to the untrained eye, at any rate.


Case in point: yesterday I wanted to see how the statistical characteristics of language are affected by different decisions about what you consider a “word.” You would think that the word “word” would be easy to define. It isn’t: not only do linguists not agree on what a word is, but you would have a hard time getting all linguists to agree that words even exist. (One of the French-language linguistics books that I have my nose stuck in the most is Maurice Pergnier’s Le mot, “The Word.” The first 50 pages (literally) are devoted to theoretical controversies around the question of whether words actually exist–or not. Want a good English-language discussion of the issues? See Elisabetta Jezek’s The lexicon: An introduction.)

So, yesterday I got to thinking about one of the questionable cases in English: contracted negatives of auxiliary verbs, including modals like could and would. Here’s what that means.

In English, there is a small number of frequently occurring verbs that can (and do) get negated not by a separate word like not, but by adding a special ending, spelled -n’t:

- is/isn’t

- did/didn’t

- have/haven’t

- could/couldn’t

- would/wouldn’t

- does/doesn’t

Note that British English has another form:

I’ve not

…which means I haven’t.

Now, if you care about statistics, you care about counting things. Think about how you would count the numbers of words in these examples:

(1) I want to go.

(2) I do want to go.

(3) I do not want to go.

(4) I don’t want to go.

(3) and (4) are both perfectly acceptable ways of negating (1) and (2). How would they affect a program that counts the number of words? It depends. Here are the straightforward cases: if (1) has four words (I, want, to, and go), then (2) has five (add do to the previous four), and (3) has six (add not to the previous five).

The questionable case is (4). You could make a reasonable argument that don’t is a single word. You also could make a reasonable argument that don’t should be counted as two words. But, which two words? A reasonable person could propose do and n’t–just split the “stem” do from the negative n’t.
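To see how the choice plays out numerically, here is a minimal Perl sketch. It counts the words in examples (1) through (4) under both policies: don’t as one word, or as do plus n’t. The helper names `count_words` and `split_negatives` are made up for illustration. The sketch only recognizes the straight apostrophe ('), and it handles punctuation crudely by deleting periods.

```perl
use strict;
use warnings;

# Hypothetical helper: split any word ending in n't (straight apostrophe)
# into stem + n't, e.g. "don't" -> "do n't".
sub split_negatives {
    my ($text) = @_;
    $text =~ s/\b(\w+)(n't)\b/$1 $2/gi;
    return $text;
}

# Hypothetical helper: drop periods, then count whitespace-separated words.
sub count_words {
    my ($text) = @_;
    $text =~ s/\.//g;
    my @words = split ' ', $text;
    return scalar @words;
}

my @examples = (
    "I want to go.",
    "I do want to go.",
    "I do not want to go.",
    "I don't want to go.",
);

for my $i (0 .. $#examples) {
    my $s = $examples[$i];
    printf "(%d) %-22s one word: %d  split: %d\n",
        $i + 1, $s, count_words($s), count_words(split_negatives($s));
}
```

Only (4) changes. It has five words if don’t is one word, and six if it is split, which makes it the same length as (3).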

Fine. But, let’s look at a little more data:

(1) I will go.

(2) I will not go.

(3) I won’t go.

(4) I can go.

(5) I cannot go.

(6) I can’t go.

Clearly (1) has three words–I, will, and go. … (2) adds one more, with not. What about (3), though? Is it inconsistent to count will not as two words, but won’t as one? Maybe. If you’re going to split it into two “words,” what are they? Presumably wo and n’t? But, what the hell is wo? Is it the same “word” as will? Notice that we’ve now had to start putting “word” in “scare quotes,” which should tell you that knowing what, exactly, a “word” is isn’t quite as simple as it might appear at first glance. Think about this: in science you need to know exactly what the thing you’re studying is, which implies that you can recognize the boundary between one of those things and the next.
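One way to make the wo question concrete is a small Perl sketch that splits a word ending in n't into a stem and a negative. It can also, optionally, map the odd stems back to a full verb. The lookup table `%full_form` (wo as will, ca as can, sha as shall) and the helper `split_negative` are hypothetical. Whether wo really is will is exactly the open question, so the mapping is a switch, not a claim.

```perl
use strict;
use warnings;

# Hypothetical table: odd stems left behind by splitting, mapped to a full verb.
# This is one possible decision, not the answer.
my %full_form = ( wo => 'will', ca => 'can', sha => 'shall' );

# Split a word like "won't" into (stem, "n't"). If $normalize is true,
# replace an odd stem such as "wo" with its full form from %full_form.
sub split_negative {
    my ($word, $normalize) = @_;
    return ($word) unless $word =~ /^(\w+)(n't)$/i;
    my ($stem, $neg) = ($1, $2);
    $stem = $full_form{lc $stem} // $stem if $normalize;
    return ($stem, $neg);
}

for my $w (qw(won't can't didn't will)) {
    print join(' ', split_negative($w, 0)), "  |  ",
          join(' ', split_negative($w, 1)), "\n";
}
```

With the switch off, won't comes out as wo n't. With it on, it comes out as will n't. didn't comes out the same either way, and will, which has no negative, is left alone.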

What’s the right answer? Hell, I don’t know. I do know this, though: if you’re interested in the statistics of language (wait–what’s you’re? Hell, what’s what’s?), then you have to be able to count things, so you have to make some decisions about where the boundaries between them are. My issue of the moment is actually not choosing between the options, but rather seeing what consequences each of those decisions would have for the resulting statistical measures. That means I need to be able to test the effects of different ways of splitting things up (or not), so I need to write some code…
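The following is a toy sketch, not the script discussed below, meant as a rough illustration of the kind of consequence in question. The Perl computes token counts, type counts (distinct tokens) and the type/token ratio for a tiny made-up corpus. It does this twice: once with n't left attached and once with it split off. The four-sentence corpus and the helper names `tokens` and `stats` are assumptions for the example. Tokenization is deliberately naive: lowercase, delete periods, split on whitespace.

```perl
use strict;
use warnings;

# Naive tokenizer: lowercase, delete periods, split on whitespace.
# If $split is true, first separate a final n't (straight apostrophe only).
sub tokens {
    my ($sentence, $split) = @_;
    my $s = lc $sentence;
    $s =~ s/\.//g;
    $s =~ s/\b(\w+)(n't)\b/$1 $2/g if $split;
    return split ' ', $s;
}

# Token count, type count (distinct tokens), and type/token ratio.
sub stats {
    my ($split, @sentences) = @_;
    my %types;
    my $n_tokens = 0;
    for my $sentence (@sentences) {
        for my $tok (tokens($sentence, $split)) {
            $n_tokens++;
            $types{$tok}++;
        }
    }
    my $n_types = keys %types;
    return ($n_tokens, $n_types, $n_tokens ? $n_types / $n_tokens : 0);
}

# Made-up four-sentence corpus for illustration.
my @corpus = (
    "I don't want to go.",
    "I do not want to go.",
    "I won't go.",
    "I will not go.",
);

for my $split (0, 1) {
    my ($n_tok, $n_typ, $ttr) = stats($split, @corpus);
    printf "%-8s tokens=%d types=%d type/token=%.2f\n",
        ($split ? 'split' : 'unsplit'), $n_tok, $n_typ, $ttr;
}
```

On this toy corpus, splitting raises the token count from 18 to 20 while the number of types stays at 9. don't and won't drop out of the vocabulary, and n't and wo come in. As a result, the type/token ratio drops from 0.50 to 0.45.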

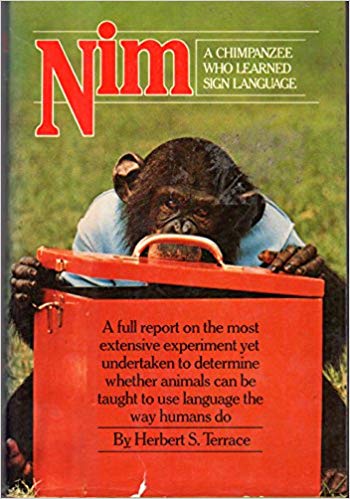

What you see below is me using a computational tool called a “regular expression” to find words that have a negative thing attached at the end (e.g. n’t) and separate the negative thing from the rest of the word. So, given an input like didn’t, I want my program to (1) recognize that it has a negative thing at the end, and (2) split it into two parts: did, and n’t. Grok (see the English notes for what grok means) the code (code means “instructions in a programming language”–here I’m using one called Perl), and then scroll down past it for an explanation of how it illustrates a piece of advice that I often give to students…

```perl
use strict;
use warnings;

# this assumes input from a pipe...
while (my $line = <>) {
    print "Input: $line";

    # this doesn't work--why?
    #$line =~ s/\b(wo|ca|did|could|should|might)(n't)\b$/\$1 $\2)/gi;

    # works...
    #$line =~ s/(a)(n)/a n/gi;

    # this does what I want...
    #$line =~ s/(a)(n)/$1 $2/gi;

    # works...
    #$line =~ s/(ca)(n't)/$1 $2/gi;

    # works...
    #$line =~ s/(ca|wo)(n't)/$1 $2/gi;

    # works...
    #$line =~ s/\b(ca|wo)(n't)\b/$1 $2/gi;

    # works...
    #$line =~ s/\b(ca|wo|did)(n't)\b/$1 $2/gi;

    # works...
    #$line =~ s/\b(ca|wo|did|could)(n't)\b/$1 $2/gi;

    # works...
    #$line =~ s/\b(ca|wo|did|could|should|might)(n't)\b/$1 $2/gi;

    # works...
    #$line =~ s/\b(ca|wo|did|had|could|should|might)(n't)\b/$1 $2/gi;

    # works...
    #$line =~ s/\b(ca|wo|did|had|have|could|should|might)(n't)\b/$1 $2/gi;

    # works...
    #$line =~ s/\b(ca|wo|do|did|has|had|have|could|should|might)(n't)\b/$1 $2/gi;

    # and finally: this pretty much looks like what I started with, but
    # what I started with most definitely does NOT work... what the fuck??
    $line =~ s/\b(ca|wo|do|did|has|had|have|would|could|should|might)(n't)\b/$1 $2/gi;

    print $line;
} # close while-loop through input
```
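Why does the first, commented-out version fail? The comments in the script don't say. One plausible diagnosis, offered as a reading of the regex rather than a stated fact, is that it has two independent problems:

- The $ right after the final \b anchors the match to the end of the line. A negative followed by anything, even a period, is therefore never matched.
- In the replacement, \$1 is an escaped dollar sign. It inserts the literal text $1 instead of the first captured group.

The $\2) that follows is not a reference to the second group either ($2 is), and its ) would be copied into the output. The sketch below isolates the first two problems on one hypothetical input line, using a one-verb pattern for brevity.

```perl
use strict;
use warnings;

my $line = "I can't go.\n";

# 1. Anchored with $: n't must be the last thing before the newline,
#    so "can't go." is left untouched.
(my $anchored = $line) =~ s/\b(ca)(n't)\b$/$1 $2/gi;

# 2. Escaped \$1 in the replacement: inserts the literal text "$1".
(my $escaped = $line) =~ s/\b(ca)(n't)\b/\$1 $2/gi;

# 3. No end anchor, plain $1 and $2: the intended split.
(my $fixed = $line) =~ s/\b(ca)(n't)\b/$1 $2/gi;

print "anchored: $anchored";
print "escaped:  $escaped";
print "fixed:    $fixed";
```

Only the third substitution turns the line into I ca n't go.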

The “regular expressions” in this code are the things that look like this:

```perl
s/\b(wo|ca|did|could|should|might)(n't)\b$/\$1 $\2)/gi
```

…or, in the case of a much shorter one, like this:

```perl
s/(a)(n)/a n/gi
```

(Note to other linguists: yes, I know that technically, the regular expression is just the part between the first two slashes, i.e. the (a)(n) in the second example, s/(a)(n)/a n/gi. Don’t hate on me–I’m trying to make this at least somewhat clear.) The lines that start with # are my notes to myself: the “reading between the lines” that you have to do to see how irritating it can be to troubleshoot this kind of thing.
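This sketch assumes the input text is typeset like this post, with curly apostrophes (n’t) rather than straight ones (n't). In that case there is a practical wrinkle: every pattern in the script above contains the straight apostrophe, so didn’t with a curly apostrophe would pass through unsplit. Below is a minimal variant that accepts either. The curly apostrophe is written as \x{2019} (RIGHT SINGLE QUOTATION MARK) so the source stays plain ASCII. The `use open` line assumes the piped input and the output are UTF-8.

```perl
use strict;
use warnings;
use open qw(:std :encoding(UTF-8));  # assume UTF-8 input and output

# Same verb list as the final version of the script, but the negative may be
# spelled with a straight (') or a curly (U+2019) apostrophe.
sub split_negatives {
    my ($text) = @_;
    $text =~ s/\b(ca|wo|do|did|has|had|have|would|could|should|might)(n['\x{2019}]t)\b/$1 $2/gi;
    return $text;
}

print split_negatives("I didn't go."), "\n";
print split_negatives("I didn\x{2019}t go."), "\n";
```

In the piped script, the same substitution could replace the final s/// line. Without the `use open` line, Perl would see the curly apostrophe as three separate bytes, and \x{2019} would not match it.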

A regular expression is a way of describing a set of things. What makes it “regular”–a mathematical term–is that those things can only occur in a very limited number of relationships. In particular, that limited set of relationships does not include some phenomena that are very important in language, such as agreement between subjects and verbs. Think of Les trois soeurs de ma grand-mère m’ont toujours aimé, “my grandmother’s three sisters have always loved me.” The issue here is that regular expressions can only describe sequences of things that you might think of as “next to” each other. Les trois soeurs is separated from the verb avoir, which must be in the third person plural form ont, by ma grand-mère, which would require the third person singular form a. (Linguists: I know.)
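Here is a small Perl sketch that makes “a way of describing a set of things” concrete. The pattern in `$negated_verb` uses a hypothetical, shortened verb list. It describes the set of strings made of one of five stems followed immediately by n't. The loop simply asks, for each candidate string, whether it belongs to that set.

```perl
use strict;
use warnings;

# The set this pattern describes: one of five verb stems immediately
# followed by n't, and nothing else (^ and $ rule out extra material).
my $negated_verb = qr/^(?:ca|wo|do|did|could)n't$/i;

for my $candidate (qw(can't won't didn't couldn't isn't can't've cant)) {
    my $verdict = $candidate =~ $negated_verb ? 'in the set' : 'not in the set';
    print "$candidate: $verdict\n";
}
```

can't, won't, didn't, and couldn't are in the set. isn't (its stem is not on the list), can't've (extra material after n't), and cant (no apostrophe) are not.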

Regular expressions, and the “regular languages” that they can describe, became important in linguistics in the 1950s. At that time, finite-state models, the machines that recognize exactly the languages regular expressions describe, were being proposed as mathematical descriptions of human languages, in the wake of Claude Shannon’s information theory. One Noam Chomsky argued, most famously in his 1957 book Syntactic Structures, that such models are inadequate as a description of human language. Around the same time he wrote a scathing review of Verbal Behavior, a book about the psychology of language by B.F. Skinner (yes, the famous psychologist), and the review brought him a lot of notice. He went on to develop these ideas into the most widespread and influential linguistic theory since the Tower of Babel. Today, if you’ve heard of only one linguist, it’s almost certainly Chomsky.

Chomsky’s critique of “regular languages” included the observation that human languages contain perfectly natural patterns that no regular language can describe. For example:

Me, my brother, and my sister went to William and Mary, Indiana University, and Virginia Tech, respectively.

The problem this illustrates for regular languages is that they have no mechanism for requiring that a list of things early in a sentence be matched, later in the sentence, by a list of exactly the same length, however long the lists get. Don’t believe me? Go read a book on “formal languages,” and then try it.
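The point can be sketched in Perl, under heavy assumptions. The hypothetical helper `lists_match` assumes three things:

- The two lists are separated by " went to ".
- Items are separated by commas, with a comma before the final and.
- The sentence ends in ", respectively."

The regular expression only locates the two lists. The check that they have the same length is done by counting and comparing two numbers in ordinary code, which is the step a regular language cannot express for lists of arbitrary length. (Perl’s own “regular expressions” have extensions, such as backreferences and recursive patterns, that go beyond regular languages in the formal sense; the sketch deliberately does not use them.)

```perl
use strict;
use warnings;

# Hypothetical helper: count the items in a comma-separated list.
# Splitting only on commas keeps "William and Mary" as one item.
sub count_items {
    my ($list) = @_;
    my @items = split /,\s*/, $list;
    return scalar @items;
}

# Hypothetical helper: does a "respectively" sentence pair up its lists?
# The regex only locates the two lists; the length check is plain arithmetic.
sub lists_match {
    my ($sentence) = @_;
    my ($people, $places) =
        $sentence =~ /^(.+?) went to (.+?),? respectively\.?$/
        or return 0;
    return count_items($people) == count_items($places);
}

my @sentences = (
    "Me, my brother, and my sister went to William and Mary, "
      . "Indiana University, and Virginia Tech, respectively.",
    "Me, my brother, and my sister went to William and Mary, "
      . "and Virginia Tech, respectively.",
);

for my $s (@sentences) {
    print lists_match($s) ? "match:  " : "differ: ", $s, "\n";
}
```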

Linguistic geekery

Regular expressions are pretty natural tools for people who work with textual data, and they’re especially natural for linguists. This surprises a lot of computer scientists, some of whom are masters of regular expressions but some of whom find them irritatingly bewildering. It turns out that if you take a course on the “formal foundations” of linguistics, i.e. its grounding in logic and set theory, you will run across regular languages, and that background makes regular expressions pretty easy to learn. And for textual data, they are really useful despite their limits. They are so useful that a programming language (named Perl) was built with regular expressions at its core, to make it easy to “parse” textual data. So, when I found myself wanting to be able to rip through a bunch of textual data and find the negative things like n’t, Perl and its regular expressions were a logical choice.
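Here is a minimal Perl sketch of that kind of rip-through-the-data job: it tallies how often each contracted negative occurs. It assumes straight apostrophes and counts case-insensitively. The two sample lines are made up. In a real pipeline, the same hypothetical helper could be given the lines of standard input instead, as in `count_negatives(<STDIN>)`.

```perl
use strict;
use warnings;

# Count each contracted negative (straight apostrophe only) across lines of
# text, case-insensitively. Returns a hash: lowercased word => count.
sub count_negatives {
    my @lines = @_;
    my %count;
    for my $line (@lines) {
        $count{lc $1}++ while $line =~ /\b(\w+n't)\b/gi;
    }
    return %count;
}

# Made-up sample lines for illustration.
my %freq = count_negatives(
    "I don't know. I can't say.",
    "Don't worry, it won't hurt.",
);

# Most frequent first; ties broken alphabetically.
for my $word (sort { $freq{$b} <=> $freq{$a} || $a cmp $b } keys %freq) {
    print "$word\t$freq{$word}\n";
}
```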