I occasionally use this blog to try out materials for something that I will be publishing. This post is a casual version of something that will go into a book that I’m writing about…writing.

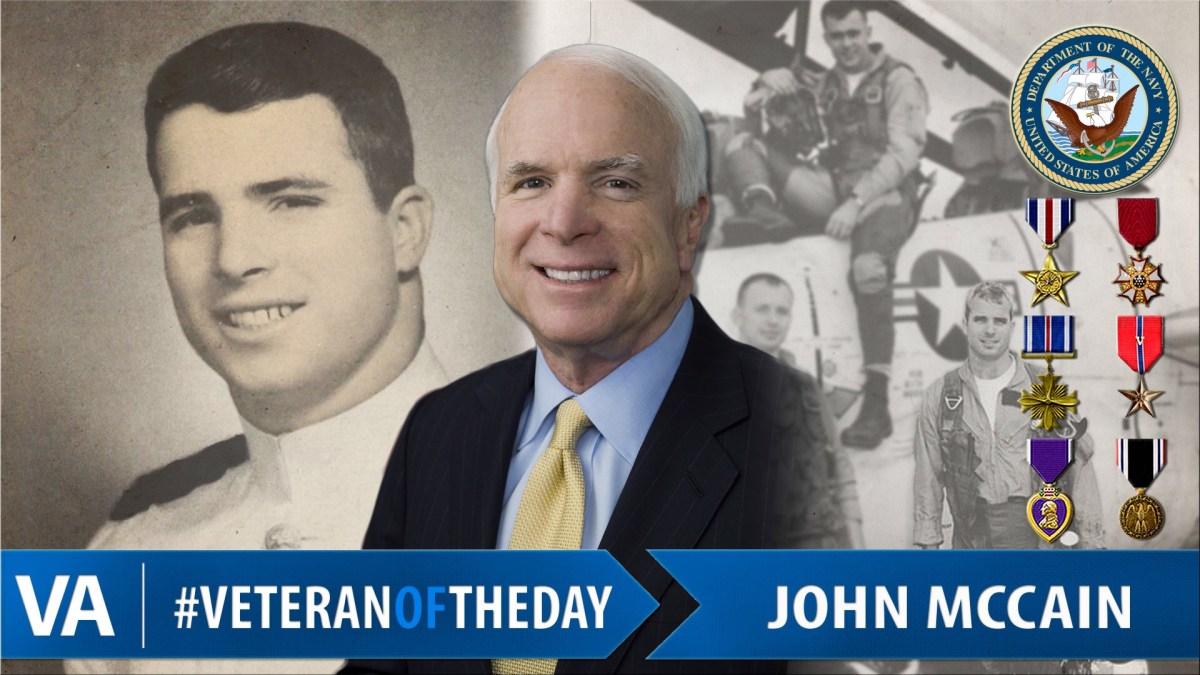

So, you’re going to do a data science project. Maybe you’re going to use natural language processing (processing: using a computer program to do something; natural language: human language, as opposed to computer languages) to analyze social media data because you want to find out how veterans feel about the medical care that they receive through the Veterans’ Administration. (Spoiler alert: a number of my buddies are vets, and they do indeed use the Veterans’ Administration health care system, and they both (a) are happy with it, and (b) recommend it to the rest of us.) Maybe you’re doing it as a project for a course; maybe you’re doing it as your first assignment at your high-paying brand-new data scientist job; maybe you’re planning to write a research paper for a journal on military health care. How do you go about doing it?

An excellent piece of advice when you’re trying to figure out how to do any research project: write out what you’re going to do, in prose, before you start doing it. As my colleague Graciela Gonzalez, of the Health Language Processing Laboratory at the University of Pennsylvania School of Medicine, puts it:

Most of us make some mistakes in the process of thinking through how we will test our hypothesis. The advantage of writing down what you’re going to do–the Methods section of a research paper, the design of your research project–before you do it is that when you see it on paper, spelled out explicitly and step by step, you will often notice the logical or procedural errors in what you were thinking, and then you won’t spend weeks making those errors before realizing that they were never going to get you where you wanted to go.

OK, so: you know that you’re going to write out your methods, very explicitly and in the order in which you will do them. But, how do you figure out what those methods should be?

An efficient way to go about this is to read research papers by other people who have done similar things. As you read them, you’re going to look for a general pattern–think of this as an example of the frameworks that we’ve talked about in other parts of this book. Returning to our example of using natural language processing to analyze social media data, you might go to PubMed/MEDLINE, the National Library of Medicine’s database of 27 million biomedical research articles, and search for papers that mention either “natural language processing” or “text mining,” and also have the words “social media” in the title or abstract. (That search would find a set of 190+ papers.)
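If you would rather script that search than type it into the search box, here is a minimal sketch. The exact query string behind the search above isn't given, so the one below is my assumption of a reasonable equivalent; `build_pubmed_query` and `build_esearch_url` are hypothetical helper names. The code only builds the URL for NCBI's E-utilities `esearch` endpoint and does not send any request.

```python
from urllib.parse import urlencode

# NCBI E-utilities search endpoint (PubMed is selected with db=pubmed).
ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def build_pubmed_query(method_terms, topic_phrase):
    """Any of the method terms, plus the topic phrase in the title or abstract."""
    methods = " OR ".join(f'"{term}"' for term in method_terms)
    return f'({methods}) AND "{topic_phrase}"[Title/Abstract]'


def build_esearch_url(query, retmax=200):
    """Build (but do not send) an esearch request URL for the query."""
    params = {"db": "pubmed", "term": query, "retmax": retmax, "retmode": "json"}
    return f"{ESEARCH}?{urlencode(params)}"


# Assumed query: the post describes the search only in words.
query = build_pubmed_query(
    ["natural language processing", "text mining"], "social media"
)
url = build_esearch_url(query)
print(query)
print(url)
```

Pasting the printed query into the PubMed search box should work as well as calling the API; the number of hits will have changed since this was written.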

The results of that search will include these three papers, which study problems similar to yours: they use natural language processing to find women talking about their pregnancy, people talking about adverse reactions to drugs, or people talking about abuse of prescription medications–not exactly what you need to do, but similar. You’ll see two steps that are carried out in all of them. Look for the points where they’re mentioned in the abstracts of the three papers:

METHODS: Our discovery of pregnant women relies on detecting pregnancy-indicating tweets (PITs), which are statements posted by pregnant women regarding their pregnancies. We used a set of 14 patterns to first detect potential PITs. We manually annotated a sample of 14,156 of the retrieved user posts to distinguish real PITs from false positives and trained a supervised classification system to detect real PITs. We optimized the classification system via cross validation, with features and settings targeted toward optimizing precision for the positive class. For users identified to be posting real PITs via automatic classification, our pipeline collected all their available past and future posts from which other information (eg, medication usage and fetal outcomes) may be mined.

Sarker, Abeed, Pramod Chandrashekar, Arjun Magge, Haitao Cai, Ari Klein, and Graciela Gonzalez. “Discovering cohorts of pregnant women from social media for safety surveillance and analysis.” Journal of Medical Internet Research 19, no. 10 (2017): e361.

METHODS: One of our three data sets contains annotated sentences from clinical reports, and the two other data sets, built in-house, consist of annotated posts from social media. Our text classification approach relies on generating a large set of features, representing semantic properties (e.g., sentiment, polarity, and topic), from short text nuggets. Importantly, using our expanded feature sets, we combine training data from different corpora in attempts to boost classification accuracies.

Sarker, Abeed, and Graciela Gonzalez. “Portable automatic text classification for adverse drug reaction detection via multi-corpus training.” Journal of Biomedical Informatics 53 (2015): 196-207.

METHODS: We collected Twitter user posts (tweets) associated with three commonly abused medications (Adderall®, oxycodone, and quetiapine). We manually annotated 6400 tweets mentioning these three medications and a control medication (metformin) that is not the subject of abuse due to its mechanism of action. We performed quantitative and qualitative analyses of the annotated data to determine whether posts on Twitter contain signals of prescription medication abuse. Finally, we designed an automatic supervised classification technique to distinguish posts containing signals of medication abuse from those that do not and assessed the utility of Twitter in investigating patterns of abuse over time.

Weissenbacher, Davy, Abeed Sarker, Tasnia Tahsin, Matthew Scotch, and Graciela Gonzalez. “Extracting geographic locations from the literature for virus phylogeography using supervised and distant supervision methods.” AMIA Summits on Translational Science Proceedings 2017 (2017): 114.

Now we can abstract out the two steps that we found in all three papers:

- The authors built a data set.

- The authors used a technique called classification–a form of machine learning–to differentiate between the social media posts that did and did not talk about a person’s own pregnancy, or an adverse reaction to a medication, or abuse of prescription medications.
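To make “classification” a little more concrete, here is a minimal sketch of one supervised classifier, a bag-of-words Naive Bayes model, written with nothing but the Python standard library. It is not the method used in any of the three papers (their abstracts don't say which algorithm they used), and the four training posts are toy examples that I invented for illustration.

```python
import math
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase and split on whitespace; real systems do much more."""
    return text.lower().split()


class NaiveBayes:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts, labels):
        self.label_counts = Counter(labels)        # posts per label
        self.word_counts = defaultdict(Counter)    # word counts per label
        self.vocab = set()
        for text, label in zip(texts, labels):
            tokens = tokenize(text)
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def predict(self, text):
        total_posts = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, n_posts in self.label_counts.items():
            score = math.log(n_posts / total_posts)  # log prior
            total_words = sum(self.word_counts[label].values())
            for token in tokenize(text):
                if token not in self.vocab:
                    continue  # ignore words never seen in training
                count = self.word_counts[label][token]
                score += math.log((count + 1) / (total_words + len(self.vocab)))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Invented toy data: does the post talk about the writer's own pregnancy?
train_texts = [
    "i am 20 weeks pregnant and so tired",
    "my baby is due in march",
    "my sister is pregnant again",
    "great game last night",
]
train_labels = ["own_pregnancy", "own_pregnancy", "other", "other"]

model = NaiveBayes().fit(train_texts, train_labels)
print(model.predict("i am pregnant and due in june"))
print(model.predict("great game"))
```

The point is the shape of the thing: labeled examples go in, and a function that assigns labels to new posts comes out. Which is why step one, building the data set, always comes first.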

So, now you have a basic outline of your methodology. Since your goal is to use natural language processing to investigate, using social media data, how veterans feel about the care that they receive through the Veterans’ Administration health care system, maybe your methodology will look like this:

1. Create a data set containing tweets in which veterans are talking about how they feel about the care that they receive in the VA health care system.

2. Use machine learning to classify those tweets into ones where the vets feel (a) positive, (b) negative, or (c) neutral about that care.

OK, so: now you can expand that. You’re quickly going to realize that Step 2–classifying those tweets–is actually going to require you to be able to do three classifications:

- You have to be able to differentiate tweets written by veterans from tweets written by everybody else.

- You have to be able to differentiate tweets where the vets are talking about the VA health care system from where they’re talking about things other than the VA health care system.

- You have to be able to classify whether the feelings that they express about the VA health care system are positive, negative, or neutral.
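One way to picture those three classifications is as a cascade, where each tweet has to pass one test before it gets to the next. The sketch below is only that, a sketch: `classify_tweet` is a hypothetical function, and the three keyword rules are crude stand-ins that I made up so that the code runs. In a real project each of them would be a trained classifier with its own labeled data.

```python
from typing import Callable, Optional


def classify_tweet(
    tweet: str,
    is_veteran: Callable[[str], bool],
    is_about_va: Callable[[str], bool],
    sentiment: Callable[[str], str],
) -> Optional[str]:
    """Return a sentiment label, or None if the tweet is out of scope."""
    if not is_veteran(tweet):       # classification 1: who wrote it?
        return None
    if not is_about_va(tweet):      # classification 2: what is it about?
        return None
    return sentiment(tweet)         # classification 3: how do they feel?


# Placeholder rules, for illustration only.
def toy_is_veteran(tweet: str) -> bool:
    return "veteran" in tweet.lower()


def toy_is_about_va(tweet: str) -> bool:
    return " va " in f" {tweet.lower()} "


def toy_sentiment(tweet: str) -> str:
    text = tweet.lower()
    if "great" in text:
        return "positive"
    if "awful" in text:
        return "negative"
    return "neutral"


for tweet in [
    "As a veteran, the VA clinic was great",
    "The VA clinic was great",
    "Proud veteran, love my dog",
]:
    print(tweet, "->", classify_tweet(tweet, toy_is_veteran, toy_is_about_va, toy_sentiment))
```

Notice that every stage needs its own labeled examples, which is exactly where the trouble starts.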

Now that you’ve started to flesh out your methodology, you realize something: creating that data set is going to take a really long time, since you essentially have to be able to label three different kinds of things in the social media posts. You have a finite amount of time and resources with which to do it, so how are you going to make that possible?

Faced with an enormous amount of work to accomplish with limited time and resources, the most sane approach is this: go to your supervisor, show them your detailed methods plan, and let them come to the conclusion that they had better either (a) give you a lot more resources, or (b) modify your assignment. Having gone through this multiple times over the course of my career, I can tell you that (b) is a hell of a lot more likely. What is the modified assignment going to look like? It’s probably going to be a reduction of the task to “just” the task of detecting tweets that were and weren’t written by veterans. Now you can go back to your outline, and modify it:

- Create a data set containing tweets written by veterans, and tweets written by anybody else.

- Use machine learning to classify those tweets into the ones that were written by veterans, and the ones that weren’t.

This is going to be hard enough, believe me. Here are some examples of what those tweets might look like–I made them up, but they’re totally plausible:

1. HM1 Zipf here, USS Biddle 1980-1982–BT3 Raven McDavid, you out there?

2. AFOSC raffle drawing at 1500–win that lawnmower and help us buy books for the squadron?

3. FTN today, FTN tomorrow, FTN and fuck Chief Chomsky til I get out this motherfucker

4. Mario Brothers, still nothin like it, bitchboys

Have you figured it out? Here are the answers:

1. Clearly written by a veteran.

2. Almost certainly written by the spouse of an active duty Air Force officer, so not written by a veteran.

3. Clearly written by a sailor who is still on active duty, so not written by a veteran.

4. No clue who it was written by, and/but there’s no reason whatsoever to think that it was written by a veteran, so it should be classified as not written by a veteran.

What’s that you say? It wasn’t clear to you at all? Think about this: if it wasn’t clear to you, it’s certainly not going to be clear to a computer program, so your classification step is going to be difficult. In fact, if it’s not clear to you, you’re going to have a hell of a difficult time building the data set–time to go back to your supervisor and ask for the resources to hire some veterans to help you out!

…and (4) raises a super-difficult question: what the hell counts as a reasonable experimental control for this research project? (Spoiler: I don’t know, and I have a doctoral degree in this particular topic.)

All of this to say:

- Your redefined project is going to be plenty hard, thank you very much.

- You wouldn’t know how crucial it was to redefine said project if you hadn’t started the process of writing out what exactly you’re going to do.

…and hell–you hadn’t even gotten to the “exactly” part yet! So: take Graciela’s point seriously, and write some things down before you start doing anything else.

…and now you can think about what you’re going to measure to figure out whether or not you were successful in doing what you were trying to do.
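What might you measure? I haven't committed to anything here, but the pregnancy paper's abstract talks about optimizing precision for the positive class, so precision, recall, and their harmonic mean (F1) are one plausible choice. A minimal sketch, with invented gold-standard labels and invented system output, and a hypothetical function name:

```python
def precision_recall_f1(gold, predicted, positive="veteran"):
    """Precision, recall, and F1 for one class, from parallel label lists."""
    pairs = list(zip(gold, predicted))
    tp = sum(g == positive and p == positive for g, p in pairs)  # true positives
    fp = sum(g != positive and p == positive for g, p in pairs)  # false positives
    fn = sum(g == positive and p != positive for g, p in pairs)  # false negatives
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Invented labels for six tweets: what a human said vs. what the system said.
gold = ["veteran", "other", "other", "other", "veteran", "veteran"]
predicted = ["veteran", "veteran", "other", "other", "other", "veteran"]

precision, recall, f1 = precision_recall_f1(gold, predicted)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Here the system found two of the three veterans (recall of 2/3), and two of its three “veteran” calls were right (precision of 2/3).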

Linguistic geekery: Raven McDavid was a dialectologist back in the day. He is said to be the inspiration for the Harrison Ford character in Raiders of the Lost Ark. Chomsky is Noam Chomsky, the most important (although not the best, in my humble opinion) linguist of the 20th century. Where they appear in the post:

- HM1 Zipf here, USS Biddle 1980-1982–BT3 Raven McDavid, you out there?

- AFOSC raffle drawing at 1500–win that lawnmower and help us buy books for the squadron?

- FTN today, FTN tomorrow, FTN and fuck Chief Chomsky til I get out this motherfucker

- Mario Brothers, still nothin like it, bitchboys