To be, or not to be- that is the question:

Whether ’tis nobler in the mind to suffer

The slings and arrows of outrageous fortune

Or to take arms against a sea of troubles,

And by opposing end them. To die- to sleep-

No more; and by a sleep to say we end

The heartache, and the thousand natural shocks

That flesh is heir to.

–William Shakespeare, Hamlet

Is a preposition a bad thing to end a sentence with? No: if you want to sound like a native speaker of English, then you need to end sentences with prepositions. In his writing guide The sense of style: the thinking person’s guide to writing in the 21st century, the linguist Steven Pinker says of the alleged rule against ending sentences with prepositions that “mockery is appropriate.”

If you teach introductory linguistics, you’ve probably had undergraduates show up in your class convinced that there’s actually some problem with ending sentences with prepositions. They never seem to have any clue why, beyond the fact that someone told them so at some point. It’s a belief that puzzles the hell out of linguists, since ending sentences with prepositions is clearly part of the English language–indeed, there are many constructions that require it.

Think for a minute about what the alternative to ending a sentence with a preposition is. There are two options: one for when you’re asking a question, and the other for a non-question. If you’re asking a question–not just any question, but one that uses one of what linguists usually call wh-words or Q-words, like what or where–you can move the preposition to the front of the sentence, preceding the wh-word:

| Normal English option: | Formal English option: |
| --- | --- |
| Who are you going to give it to? | To whom are you going to give it? |
| Where are you going to get it from? | From where are you going to get it? |

For non-questions, you can make a relative clause, and move the preposition to the front of that clause, where it precedes the relativizer:

| Normal English option: | Formal English option: |
| --- | --- |
| That’s the store I’m going to buy it from. | That’s the store from which I’m going to buy it. |
| That’s the guy I’m going to give an ass-kicking to. | That’s the guy to whom I’m going to give an ass-kicking. |

Linguists call the option that’s more common in formal English pied piping. You might remember the Pied Piper of Hamelin. He was hired to remove all of the rats from a little town in Germany. When the townspeople didn’t pay him, he led all of their children away. Similarly, we think of the wh-word and/or the relativizer as “leading away” the preposition from where it would normally go.

I’ve never really understood how anyone could believe that there’s anything “real” about the don’t-end-a-sentence-with-a-preposition thing. In fact, there are plenty of things that you can’t say in English without a preposition at the end of the sentence. Do you want to take a dip in the pool before lunch? Only if you’re going to. I found a nice one on pemberly.com:

What did you bring that book that I don’t like to be read to out of up for?

I tried to figure out a way to say this with pied piping:

For what did you bring up that book out of which I do not like to be read to?

For what did you bring up that book to which? whom? I do not like to be read out of?

I’m a native speaker, and I can’t come up with a way to do it.

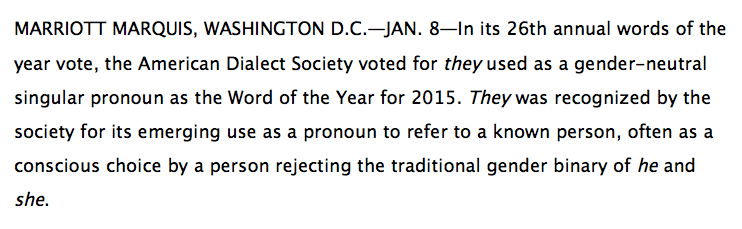

At the top of this page, you’ll find a quote from Shakespeare featuring a sentence-final preposition. My point in including it is to demonstrate that our greatest writers have used the construction. However, you shouldn’t take a writer’s use of something as prima facie evidence that they approve of it–you need to look at who it’s used by (or by whom it’s used, if you prefer). For example, when I translate my own speech from French into English, I typically do so using English in ways that I would never use it if I were speaking English, with the express purpose of trying to communicate how bad my French is: “This wants to say what, égout?,” I asked. I…um…likes Hawaii. It’s OK—I is leaving early today. Jane Austen puts some constructions only into the mouths of people who she wants to portray as idiots. Who says the lines in the quote at the top of this page? Hamlet, the protagonist of what is widely considered to be Shakespeare’s greatest play, the one that you’re likely to have read even if you haven’t read anything else by the man. Point being: it’s tough to argue that Shakespeare put that preposition at the end of the sentence because he didn’t like it.

So, where did this whole “a preposition is a bad thing to end a sentence with” mishegas come from? The linguist Steven Pinker attributes it to the seventeenth-century British poet and literary critic John Dryden, who he says originated it in an excoriation of playwright and poet Ben Jonson’s work. According to David Thatcher’s book Saving our prepositions, it then found its way into Robert Lowth’s 1762 book Short introduction to English grammar, and insinuated its way into English-language pedagogy from there.

Are there similar phenomena in France–alleged rules that don’t actually reflect at all how the language is used by native speakers? Probably, but I don’t know what they are. Indeed, mixing language from different registers is exactly the kind of thing that I mess up all the time: saying the colloquial je crève d’envie de… (“I’m dying to…”) in a social context in which I should say je meurs d’envie de… (also “I’m dying to,” but more appropriate for a formal situation), or failing to say ça m’est égal (“It doesn’t matter to me”) and instead saying je m’en bats les couilles (also “it doesn’t matter to me,” but more literally something like “I bang my balls about it”).

I’ll leave you today with another quote from a non-stupid Shakespearean character:

We are such stuff as dreams are made on.

–William Shakespeare, The Tempest

…and, yes, it’s on, not of.